Many modern cloud-native applications are no longer built as monoliths. Instead, they consist of dozens, or even hundreds, of small, independently deployable services that communicate over the network. While this microservices architecture enables agility and scalability, it also introduces significant operational complexity. Ensuring reliable, secure, and observable communication between services quickly becomes one of the biggest engineering challenges teams face.

TL;DR: Service mesh tools like Linkerd simplify communication between microservices by managing traffic, security, and observability at the infrastructure level. Instead of building complex networking logic into each service, a service mesh handles encryption, retries, load balancing, and metrics automatically. This reduces operational overhead, improves reliability, and enhances visibility across distributed systems. Tools such as Linkerd, Istio, and Consul each offer distinct strengths depending on organizational needs.

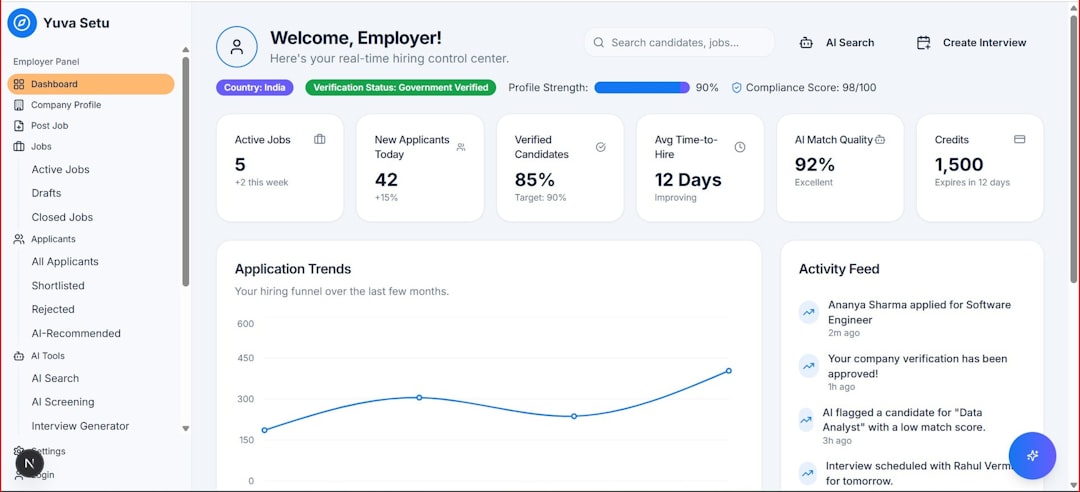

What Is a Service Mesh?

A service mesh is an infrastructure layer designed to control and observe service-to-service communication in distributed applications. Rather than embedding networking logic directly into application code, a service mesh handles it externally—usually through lightweight network proxies deployed alongside each service instance.

In simpler terms, it acts as a traffic manager for your microservices.

Key responsibilities of a service mesh typically include:

- Service discovery

- Load balancing

- Traffic routing

- Encryption (mTLS)

- Retries and failover

- Observability and metrics

Instead of coding these functions repeatedly across services, developers can rely on the mesh to handle them uniformly and reliably.
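To make one of these responsibilities concrete, here is a minimal Python sketch of latency-aware load balancing, assuming a "power of two choices" strategy: sample two endpoints at random and send the request to the one with the lower exponentially weighted moving average (EWMA) latency. Linkerd describes its own load balancing as EWMA-based, but this is a simplified illustration rather than Linkerd's code; the `Endpoint` class, the `alpha` smoothing factor, and `pick_endpoint` are hypothetical names.

```python
import random
from typing import List, Optional


class Endpoint:
    """One backend instance, with a smoothed estimate of its latency."""

    def __init__(self, address: str, alpha: float = 0.3) -> None:
        self.address = address
        self.alpha = alpha  # hypothetical smoothing factor; higher reacts faster
        self.ewma_ms: Optional[float] = None  # None until the first sample

    def observe(self, latency_ms: float) -> None:
        """Fold a new latency sample into the moving average."""
        if self.ewma_ms is None:
            self.ewma_ms = latency_ms
        else:
            self.ewma_ms = self.alpha * latency_ms + (1 - self.alpha) * self.ewma_ms

    def score(self) -> float:
        # Unobserved endpoints score 0, so they are favored until measured.
        return 0.0 if self.ewma_ms is None else self.ewma_ms


def pick_endpoint(endpoints: List[Endpoint], rng: random.Random) -> Endpoint:
    """Power of two choices: sample two endpoints, keep the faster one."""
    if len(endpoints) < 2:
        return endpoints[0]
    a, b = rng.sample(endpoints, 2)
    return a if a.score() <= b.score() else b


if __name__ == "__main__":
    fast, slow = Endpoint("10.0.0.1:8080"), Endpoint("10.0.0.2:8080")
    fast.observe(12.0)
    slow.observe(250.0)
    print(pick_endpoint([fast, slow], random.Random(42)).address)  # 10.0.0.1:8080
```

Comparing only two random candidates keeps each decision cheap while still steering most traffic away from slow instances, which is the kind of logic a mesh runs in its proxy so that services do not have to.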

Why Microservices Need a Service Mesh

In a monolithic architecture, components usually communicate through in-process function calls. In microservices, that communication happens over the network, which introduces latency, partial failures such as dropped packets or timeouts, and security concerns.

Consider the challenges:

- Services must discover each other dynamically.

- Failures must be handled gracefully with retries and backoffs.

- Load must be balanced across instances, especially during traffic spikes.

- Data must be encrypted in transit.

- Performance bottlenecks must be diagnosed quickly.

Implementing these capabilities manually leads to duplicated code, inconsistent behavior, and security risks. A service mesh centralizes and standardizes this logic.
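For a sense of what that duplicated code looks like, the sketch below is the kind of retry-with-backoff helper each service would otherwise carry, reimplemented in every language the system uses. It is a generic Python illustration, not any mesh's API: `call_with_retries`, `TransientError`, and the "full jitter" backoff policy are assumptions chosen for the example.

```python
import random
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical error type for failures worth retrying, such as a 503."""


def call_with_retries(
    fn: Callable[[], T],
    max_attempts: int = 3,
    base_delay_s: float = 0.1,
    max_delay_s: float = 2.0,
    sleep: Callable[[float], None] = time.sleep,
    rng: Optional[random.Random] = None,
) -> T:
    """Call fn, retrying transient failures with capped exponential backoff.

    Each wait is drawn uniformly from [0, min(max_delay_s, base_delay_s * 2**n)]
    ("full jitter"), so many clients retrying at once do not stay in lockstep.
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    if rng is None:
        rng = random.Random()
    for attempt in range(max_attempts):
        try:
            return fn()
        except TransientError:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the last error to the caller
            cap = min(max_delay_s, base_delay_s * (2 ** attempt))
            sleep(rng.uniform(0, cap))
    raise AssertionError("unreachable")
```

Retrying is only safe for idempotent operations, so in practice a policy like this is scoped per route; a mesh lets operators configure it once in the proxy layer instead of in each codebase.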

How Linkerd Helps Simplify Service Communication

Linkerd is one of the earliest and most lightweight service mesh tools available. Known for its simplicity and performance, Linkerd focuses on reducing operational complexity while providing essential service mesh features.

1. Lightweight Architecture

Unlike some feature-heavy solutions, Linkerd prioritizes stability and ease of use. Its data plane uses linkerd2-proxy, a purpose-built, Rust-based micro-proxy, rather than a general-purpose proxy, and it is designed to keep CPU and memory consumption low.

This makes it ideal for teams that want:

- Fast installation

- Low operational overhead

- High performance with minimal latency

2. Automatic mTLS Encryption

Security in distributed systems is non-negotiable. Linkerd automatically enables mutual TLS (mTLS) between services without requiring code changes. This ensures:

- Encrypted communication

- Service identity verification

- A foundation for zero-trust networking

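Linkerd's identity component issues and rotates short-lived workload certificates automatically, so developers do not have to manage certificates by hand. Operators still own the cluster-wide trust anchor and issuer certificate.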
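For comparison, this is roughly what each service would have to configure for mutual TLS if it handled certificates itself, sketched with Python's standard `ssl` module. The function names and certificate paths are hypothetical; in a meshed service none of this lives in application code, because the sidecar proxy originates and terminates mTLS on the service's behalf.

```python
import ssl
from typing import Optional


def mtls_server_context(
    cert_file: Optional[str] = None,  # hypothetical paths; optional only so the
    key_file: Optional[str] = None,   # sketch runs without real certificate files
    ca_file: Optional[str] = None,
) -> ssl.SSLContext:
    """Server side: present our certificate AND require one from the caller."""
    ctx = ssl.create_default_context(ssl.Purpose.CLIENT_AUTH)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    ctx.verify_mode = ssl.CERT_REQUIRED  # the "mutual" part: callers must prove identity
    if cert_file and key_file:
        ctx.load_cert_chain(certfile=cert_file, keyfile=key_file)
    if ca_file:
        ctx.load_verify_locations(cafile=ca_file)  # trust only our internal CA
    return ctx


def mtls_client_context(
    cert_file: Optional[str] = None,
    key_file: Optional[str] = None,
    ca_file: Optional[str] = None,
) -> ssl.SSLContext:
    """Client side: verify the server, then present our own certificate."""
    ctx = ssl.create_default_context(ssl.Purpose.SERVER_AUTH, cafile=ca_file)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    if cert_file and key_file:
        ctx.load_cert_chain(certfile=cert_file, keyfile=key_file)
    return ctx
```

The server-side `CERT_REQUIRED` setting is what makes the TLS "mutual": a caller without a certificate signed by a trusted CA is rejected during the handshake. Issuing, distributing, and rotating those certificate files for every service is the part the mesh automates.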

3. Powerful Observability

Linkerd provides built-in metrics and visibility into service performance, including:

- Request success rates

- Latency percentiles

- Live traffic flows

- Error breakdowns

This visibility allows teams to detect and diagnose issues quickly—often before end users notice.
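Success rates and latency percentiles are straightforward to compute once every request passes through a proxy. The sketch below derives both from one window of request records, using nearest-rank percentiles; the `RequestSample` type, the `summarize` function, and the rule that only 5xx responses count as failures are assumptions for illustration, not Linkerd's exact definitions.

```python
import math
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class RequestSample:
    status: int        # HTTP status code returned to the caller
    latency_ms: float  # time from request sent to response received


def percentile(sorted_values: List[float], p: float) -> float:
    """Nearest-rank percentile: smallest value with at least p% of data at or below it."""
    if not sorted_values:
        raise ValueError("no samples")
    rank = max(1, math.ceil(p * len(sorted_values) / 100))
    return sorted_values[rank - 1]


def summarize(samples: List[RequestSample]) -> Dict[str, float]:
    """Success rate plus p50/p95/p99 latency for one window of traffic."""
    if not samples:
        raise ValueError("no samples")
    latencies = sorted(s.latency_ms for s in samples)
    successes = sum(1 for s in samples if s.status < 500)  # assumption: only 5xx fails
    return {
        "success_rate": successes / len(samples),
        "p50_ms": percentile(latencies, 50),
        "p95_ms": percentile(latencies, 95),
        "p99_ms": percentile(latencies, 99),
    }
```

Percentiles matter more than averages here because a small fraction of very slow requests can dominate user experience while barely moving the mean.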

4. Progressive Delivery Support

With traffic splitting capabilities, Linkerd enables:

- Blue-green deployments

- Canary releases

- A/B testing

Teams can shift traffic gradually to new versions, reducing deployment risk.
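A traffic split boils down to weighted backend selection. The sketch below picks a backend with probability proportional to its weight, as in a 90/10 canary; `choose_backend`, `route`, and the backend names are hypothetical. In Linkerd the weights live in Kubernetes resources rather than in application code, so shifting traffic means editing those weights rather than redeploying services.

```python
import bisect
import itertools
import random
from typing import Dict


def choose_backend(split: Dict[str, int], ticket: int) -> str:
    """Map an integer ticket in [0, total_weight) to the backend that owns it."""
    names = list(split)
    cumulative = list(itertools.accumulate(split[n] for n in names))
    total = cumulative[-1] if cumulative else 0
    if not 0 <= ticket < total:
        raise ValueError(f"ticket must be in [0, {total})")
    # Each backend owns a contiguous range of tickets as wide as its weight;
    # bisect_right finds the first range whose upper bound exceeds the ticket.
    return names[bisect.bisect_right(cumulative, ticket)]


def route(split: Dict[str, int], rng: random.Random) -> str:
    """Pick a backend with probability proportional to its weight."""
    return choose_backend(split, rng.randrange(sum(split.values())))


if __name__ == "__main__":
    canary_split = {"web-stable": 90, "web-canary": 10}
    rng = random.Random(1)
    print([route(canary_split, rng) for _ in range(5)])
```

Promoting the canary is then a sequence of weight changes, for example 90/10, then 50/50, then 0/100; a blue-green cutover is the same mechanism with a single jump from 100/0 to 0/100.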

Other Popular Service Mesh Tools

While Linkerd is known for simplicity, it is not the only option. Several service mesh tools serve different operational needs and complexity levels.

Istio

Istio is a feature-rich, enterprise-grade service mesh originally developed by Google, IBM, and Lyft, and it uses Envoy as its proxy. It offers:

- Advanced traffic routing rules

- Strong policy enforcement

- Extensive telemetry support

- Deep integration with Kubernetes

However, Istio can be complex to operate and requires careful configuration.

Consul Connect

HashiCorp’s Consul provides service discovery alongside mesh capabilities. It works well in:

- Hybrid environments

- Multi-cloud architectures

- VM and Kubernetes deployments

Consul integrates closely with other HashiCorp tools, making it attractive for infrastructure-heavy teams.

Kuma

Kuma, built by Kong, supports multi-zone deployments and runs across Kubernetes and virtual machines. It’s designed for organizations needing mesh capabilities beyond Kubernetes clusters.

Service Mesh Tools Comparison Chart

| Feature | Linkerd | Istio | Consul | Kuma |
|---|---|---|---|---|
| Ease of Installation | Very Easy | Moderate to Complex | Moderate | Moderate |
| Performance Overhead | Low | Medium | Medium | Medium |
| Built-in mTLS | Automatic | Configurable | Supported | Supported |
| Advanced Routing | Basic | Extensive | Moderate | Moderate |
| Best For | Simplicity and performance | Enterprise feature depth | Hybrid environments | Multi-zone deployments |

Core Benefits of Using a Service Mesh

1. Reduced Developer Burden

Developers can focus on building business logic without embedding networking code into services. This results in cleaner architecture and faster feature development.

2. Consistent Security Practices

By enforcing encryption and authentication centrally, a service mesh helps teams meet modern security and compliance requirements without additional developer effort.

3. Improved Reliability

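Built-in retries, timeouts, and circuit breaking help stop a failure in one service from cascading to the services that depend on it. Because the mesh applies them consistently to every service, these patterns improve resilience without any per-service code.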
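Circuit breaking is the least self-explanatory of these patterns, so here is a minimal Python sketch of the common three-state design: after a run of consecutive failures the breaker opens and fails fast, and after a cool-down it lets a trial request through (half-open) to decide whether to close again. The class, thresholds, and injectable clock are assumptions for illustration; meshes implement this inside the proxy and their exact policies differ.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitOpenError(Exception):
    """Raised when the breaker rejects a call without attempting it."""


class CircuitBreaker:
    def __init__(
        self,
        failure_threshold: int = 5,
        reset_timeout_s: float = 10.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.failure_threshold = failure_threshold
        self.reset_timeout_s = reset_timeout_s
        self.clock = clock  # injectable so tests can control time
        self.consecutive_failures = 0
        self.opened_at: Optional[float] = None  # None means the circuit is closed

    @property
    def state(self) -> str:
        if self.opened_at is None:
            return "closed"
        if self.clock() - self.opened_at >= self.reset_timeout_s:
            return "half-open"  # cool-down elapsed: allow a trial request
        return "open"

    def call(self, fn: Callable[[], T]) -> T:
        if self.state == "open":
            raise CircuitOpenError("failing fast while the backend recovers")
        try:
            result = fn()
        except Exception:  # this sketch treats any exception as a failure
            self.consecutive_failures += 1
            # A failed trial, or too many failures while closed, (re)opens the circuit.
            if self.state == "half-open" or self.consecutive_failures >= self.failure_threshold:
                self.opened_at = self.clock()
            raise
        self.consecutive_failures = 0
        self.opened_at = None  # any success closes the circuit
        return result
```

Failing fast while the circuit is open is what prevents the cascade: callers get an immediate error instead of piling up requests against a backend that is already struggling.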

4. Enhanced Observability

A service mesh emits the same set of metrics for every service, regardless of the language it is written in. Unified dashboards allow operators to:

- Trace request paths

- Identify latency bottlenecks

- Monitor error rates

- Analyze traffic patterns

5. Controlled Traffic Management

Traffic shaping capabilities enable safe experimentation and gradual rollouts—without redeploying code.

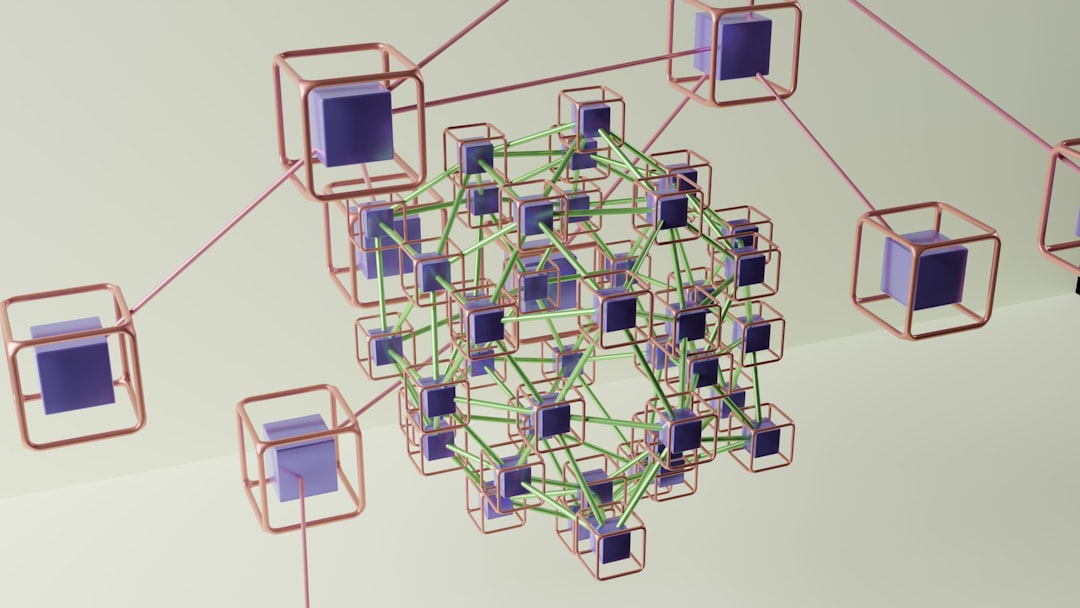

How Service Mesh Works Under the Hood

Most service meshes follow the sidecar proxy model. Each service instance (in Kubernetes, each pod) runs alongside a small proxy container that intercepts its inbound and outbound network traffic.

This proxy handles:

- Encryption

- Routing decisions

- Metrics collection

- Policy enforcement

The collection of these proxies forms the data plane, while a centralized control plane manages configuration and policy distribution.
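The split between the two planes can be modeled in a few lines. In this toy Python sketch, a hypothetical `ControlPlane` distributes one kind of policy (which callers may reach a service) to every registered `SidecarProxy`, and each proxy enforces it and counts requests before handing traffic to the unmodified application. Real proxies intercept traffic at the network level and receive configuration over the network; the deny-by-default behavior and all names here are assumptions of the sketch.

```python
from dataclasses import dataclass
from typing import Callable, Dict, FrozenSet, List, Tuple

Response = Tuple[int, str]  # (status code, body)


@dataclass(frozen=True)
class Policy:
    allowed_callers: FrozenSet[str]  # service identities permitted to call


class SidecarProxy:
    """Data plane: sits in front of one service instance."""

    def __init__(self, service: str, app: Callable[[str], Response]) -> None:
        self.service = service
        self.app = app  # the unmodified application handler
        self.policy = Policy(frozenset())  # deny all until configured
        self.metrics: Dict[str, int] = {"requests": 0, "denied": 0, "errors": 0}

    def handle_inbound(self, caller: str, request: str) -> Response:
        self.metrics["requests"] += 1
        if caller not in self.policy.allowed_callers:  # policy enforcement
            self.metrics["denied"] += 1
            return (403, "forbidden by mesh policy")
        status, body = self.app(request)  # forward to the local app
        if status >= 500:
            self.metrics["errors"] += 1  # metrics collection
        return (status, body)


class ControlPlane:
    """Control plane: owns configuration, never handles request traffic."""

    def __init__(self) -> None:
        self.proxies: List[SidecarProxy] = []
        self.policies: Dict[str, Policy] = {}

    def register(self, proxy: SidecarProxy) -> None:
        self.proxies.append(proxy)
        proxy.policy = self.policies.get(proxy.service, Policy(frozenset()))

    def set_policy(self, service: str, policy: Policy) -> None:
        self.policies[service] = policy
        for proxy in self.proxies:  # distribute to every matching proxy
            if proxy.service == service:
                proxy.policy = policy
```

Changing who may call `orders` in this model touches only the control plane; the application handler never changes, which is the point of keeping these concerns in the infrastructure layer.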

When Should You Adopt a Service Mesh?

A service mesh adds value in environments where:

- You run large Kubernetes clusters.

- You manage dozens of interdependent services.

- You require strict zero-trust security.

- You need advanced observability.

- You deploy frequently using canary or blue-green strategies.

However, for small systems with only a handful of services, implementing a service mesh may introduce unnecessary complexity. As with any infrastructure tool, it should solve real problems—not create new ones.

Best Practices for Implementing Linkerd

- Start small: Deploy the mesh in a staging environment first.

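- Incremental onboarding: Inject the Linkerd proxy one namespace or workload at a time (for example, with the `linkerd.io/inject: enabled` annotation) rather than meshing everything at once. The proxy is added when pods are created or restarted.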

- Monitor performance: Compare latency, success rates, and resource usage before and after onboarding to understand the mesh's impact.

- Educate teams: Ensure developers understand how traffic flows through the mesh.

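- Automate certificate rotation: Linkerd rotates workload certificates on its own, but the issuer certificate and trust anchor it relies on also expire, so automate their rotation too (for example, with cert-manager).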

The Future of Service Mesh Technology

As distributed systems evolve, service meshes are expanding beyond Kubernetes. Emerging trends include:

- Multi-cluster federation

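- Standard APIs such as the Kubernetes Gateway API, whose GAMMA initiative covers service mesh routing and has largely replaced the now-archived Service Mesh Interface (SMI)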

- Reduced resource overhead

- Improved developer tooling

Tools like Linkerd continue to prioritize simplicity and performance, while the broader ecosystem moves toward better interoperability and reduced operational barriers.

Final Thoughts

Managing microservice communication at scale is inherently complex. Service mesh tools like Linkerd provide a powerful yet streamlined way to handle networking, security, and observability concerns without overburdening development teams.

By abstracting service-to-service communication into a dedicated infrastructure layer, organizations gain:

- Better reliability

- Stronger security

- Clearer observability

- Safer deployments

Whether you choose Linkerd for its simplicity, Istio for its deep feature set, or another mesh tailored to your infrastructure, the ultimate goal remains the same: making distributed systems easier to operate and scale. For organizations running microservices at scale, service meshes are no longer optional luxuries; they are becoming foundational components of resilient cloud-native systems.